Controlling robots

The ‘iriss’ mission saw ESA astronaut Andreas Mogensen continue pioneering work to control robots and hardware from the International Space Station.

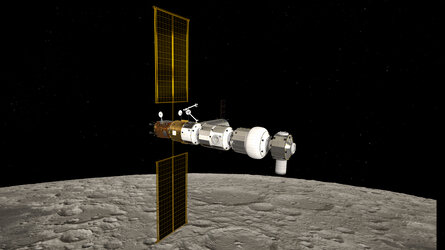

Space exploration will most likely involve sending robotic explorers to ‘test the waters’ on uncharted planets before sending humans to land, and ESA is preparing for that future.

Controlling a rover on Mars is a real headache for mission controllers because commands take an average of 14 minutes to reach the Red Planet.
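As a rough illustration of where such delays come from, the one-way signal time is the distance divided by the speed of light. The sketch below is not ESA's own calculation: the Earth-Mars distances are rounded, approximate figures assumed for illustration, and the function name is hypothetical.

```python
# Speed of light in vacuum, in km/s (exact by definition of the metre).
SPEED_OF_LIGHT_KM_S = 299_792.458


def one_way_delay_minutes(distance_km: float) -> float:
    """Return the one-way signal travel time in minutes for a given distance."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


# Assumed, rounded Earth-Mars distances in km (illustrative values only).
APPROX_DISTANCES_KM = {
    "closest approach": 55_000_000,
    "farthest separation": 400_000_000,
}

for label, distance_km in APPROX_DISTANCES_KM.items():
    print(f"{label}: {one_way_delay_minutes(distance_km):.1f} min one way")
```

Under these assumed distances the delay ranges from about three minutes to over twenty. Read the other way, a 14-minute delay corresponds to a separation of roughly 250 million km.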

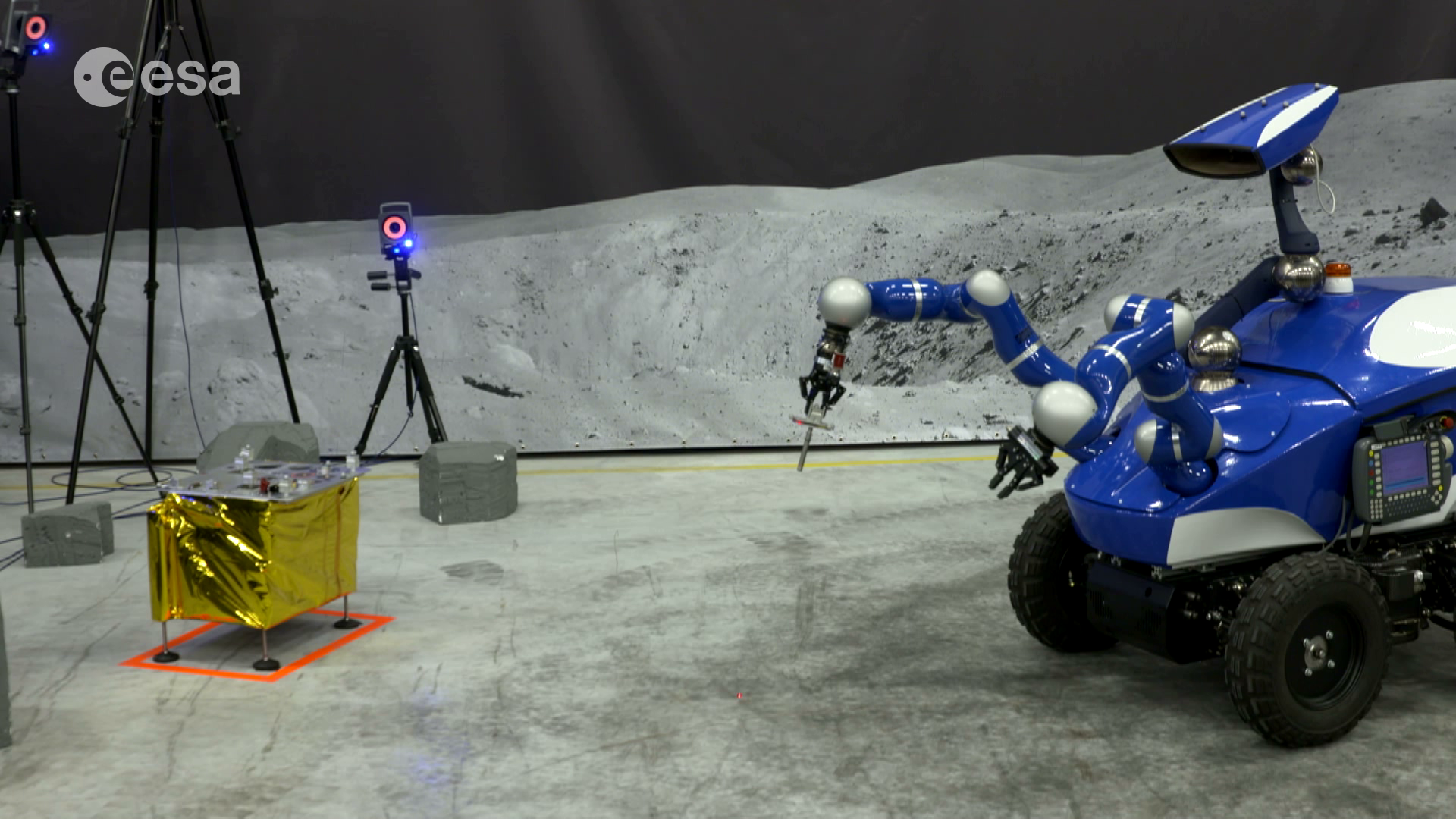

A project called Meteron is developing the tools to control robots on distant planets while astronauts orbit above. This includes developing a robust space-internet, designing the software to control the robots and developing the interface hardware.

Eurobot

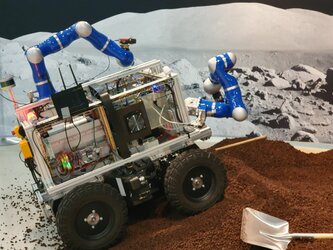

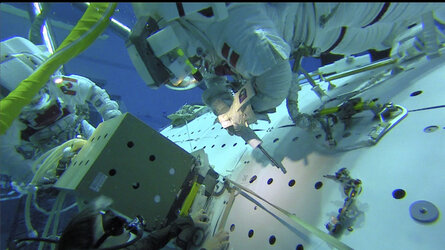

The car-sized rover Eurobot, located at ESA’s ESTEC facility, has been used in several experiments, with its capabilities steadily expanded as each experiment succeeded. Andreas continued work done by NASA astronaut Sunita Williams, who first tested the network connection from the International Space Station in 2012 when she commanded a small Lego rover at ESA’s operations centre in Darmstadt, Germany.

Two years later, ESA astronaut Alexander Gerst commanded the Eurobot in ESA’s technical heart in Noordwijk, the Netherlands. Circling Earth 400 km above, Alexander controlled the robot and received images and telemetry from it.

Andreas instructed Eurobot to interact with other objects to perform a variety of tasks, including unfurling a solar panel on a mock-up lander module in the Meteron SUPVIS-E experiment on 8 September 2015. During the experiment, he also operated another smaller rover and its camera to gain situational awareness while he monitored Eurobot’s progress.

This experiment ramped up the complexity and was as much a test of the user interface used to control the rover, known as Elios, as anything else. Andreas monitored and controlled two rovers at the same time using just one laptop from 400 kilometres away in space!

Haptics-2

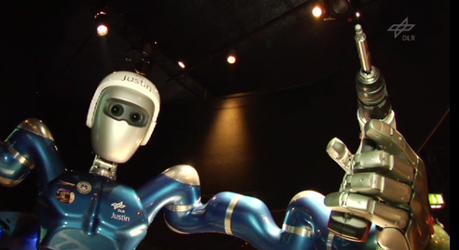

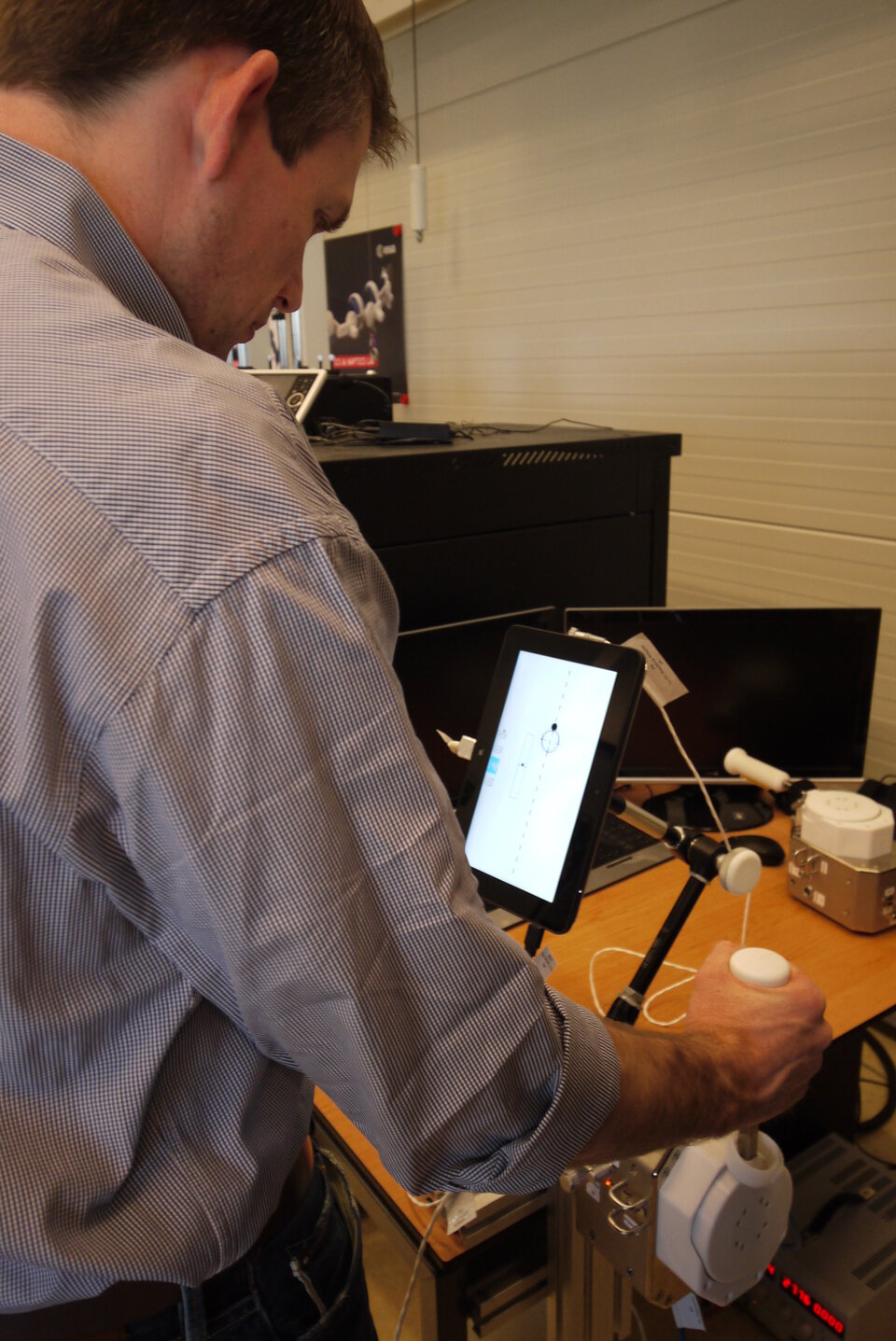

Concentrating more on the interface between the astronaut and the robot, the Haptics-2 experiment saw Andreas control a rover with an added sense – touch.

ESA performed the first-ever demonstration of space-to-ground remote control with live video and force feedback on 3 June 2015, when NASA astronaut Terry Virts on the International Space Station shook hands with ESA telerobotics specialist André Schiele in the Netherlands.

Andreas used a joystick on the Station that is a twin of one on Earth; moving either makes its copy move in the same way. This allows astronauts in space to ‘feel’ objects from hundreds of kilometres away and provides feedback so both users can feel the force of the other pushing or pulling.

As the Space Station travels at 28 800 km/h, the time for each signal to reach its destination changes continuously, but the system automatically adjusts to varying time delays.

In the Interact experiment, Andreas first directed a more advanced robot on the ground to a control board and then used the touchy-feely joystick to insert a peg into a hole. This test, on 7 September 2015, was the first real-life trial of the equipment in a situation similar to that imagined on another planet.

Controlling robots closer to home

Research into controlling robots from afar with robust communications has great potential for use on Earth. Searching for earthquake survivors, investigating nuclear fallout and exploring the bottom of our oceans or volcanoes are all situations that would benefit from robotic explorers that can be controlled over limited communications networks.

The system’s adaptability and robust design make it well suited to remote areas that are difficult to access or where disasters have destroyed communication networks.

The direct and sensitive feedback coupled with safeguards against excessive forces would allow rovers and robots to carry out delicate operations in the extreme conditions found in offshore drilling and nuclear reactors, for example.
