Pinpoint vision-based landings on Moon, Mars and asteroids

A new vision-based navigation software system for autonomous planetary landings, tested at ESA using a scale-model Moonscape, has been recognised for excellence by France’s ONERA aerospace centre.

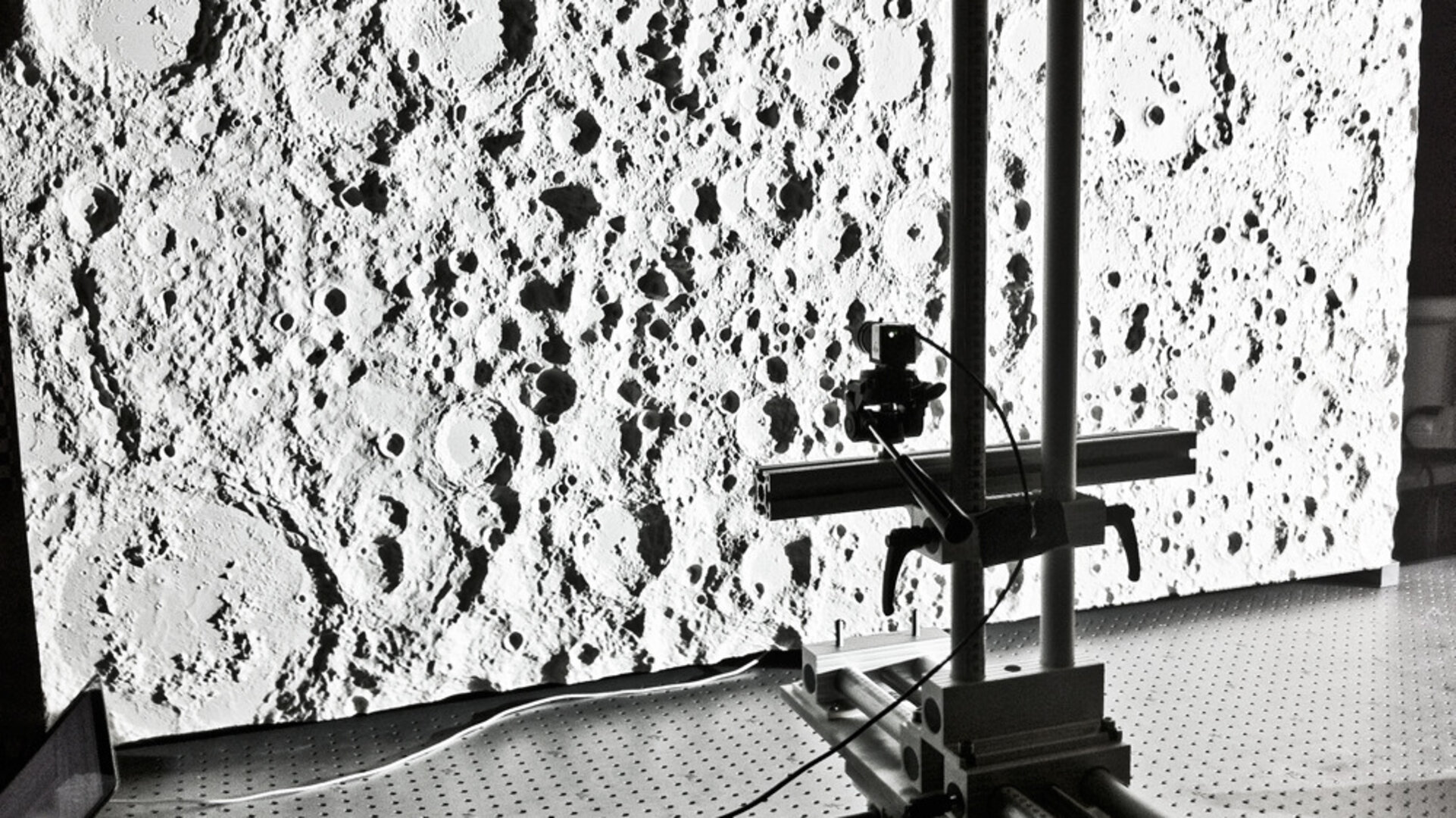

The landing software was evaluated in real life using a compact scale model of the lunar surface linked to a mobile camera, set up in ESA’s Control Hardware Laboratory, located in the ESTEC technical centre in Noordwijk, the Netherlands.

PhD student Jeff Delaune’s three-year research has been recognised by French aerospace research centre ONERA, which named it one of its six top PhDs of the year. His PhD was co-sponsored by ONERA and Astrium Space Transportation in France.

“The point of my work has been to determine the location of a probe landing on another planet, basically fulfilling the function of GPS here on Earth – not something available on the Moon, Mars or asteroids,” explains Jeff.

“And we want to do it using only a simple camera, a piece of equipment already readily available on landing missions.

“If I’m a tourist in Paris, I might look for directions to famous landmarks such as the Eiffel Tower, the Arc de Triomphe or Notre Dame cathedral to help find my position on a map.

“If the same process is repeated from space with enough surface landmarks seen by a camera, the eye of the spacecraft, it can then pretty accurately identify where it is by automatically comparing the visual information to maps we have onboard in the computer.”
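The landmark idea Jeff describes can be illustrated with a deliberately simplified sketch. It is not LION's algorithm: it assumes a pinhole camera pointing straight down over flat terrain, a known altitude, image axes aligned with the map axes, and landmarks that have already been matched to the map. The function name, focal length and landmark coordinates are all hypothetical.

```python
def estimate_position(map_xy, pixels_uv, focal_px, altitude_m):
    """Average the camera ground position implied by each matched landmark.

    map_xy:    landmark positions on the onboard map, in metres
    pixels_uv: the same landmarks in the image, as pixel offsets from the centre
    Assumes a nadir-pointing pinhole camera over flat terrain (illustrative only).
    """
    estimates = []
    for (x, y), (u, v) in zip(map_xy, pixels_uv):
        # A landmark at pixel offset (u, v) lies altitude * (u, v) / focal
        # metres away from the point directly below the camera.
        estimates.append((x - altitude_m * u / focal_px,
                          y - altitude_m * v / focal_px))
    n = len(estimates)
    return (sum(e[0] for e in estimates) / n,
            sum(e[1] for e in estimates) / n)


# Hypothetical values: three mapped landmarks seen from 3 km altitude.
landmarks = [(1200.0, 300.0), (-400.0, 900.0), (250.0, -650.0)]
true_pos = (100.0, -50.0)
focal_px, altitude_m = 1000.0, 3000.0

# Simulate where each landmark would appear in the image.
pixels = [((x - true_pos[0]) * focal_px / altitude_m,
           (y - true_pos[1]) * focal_px / altitude_m) for x, y in landmarks]

estimate = estimate_position(landmarks, pixels, focal_px, altitude_m)
print(estimate)
```

With noise-free pixels the estimate recovers the simulated position; averaging over several landmarks is what would reduce the effect of measurement noise in practice.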

All this information is crucial for the performance of the autopilot in order to enable pinpoint landing, namely landing within 100 m of a target – while current missions are limited to accuracies of a few kilometres at best.

The biggest challenges include ‘scalability’, since the view from orbit at 100 km up can look very different from the view as the lander nears the ground, and handling the relief of the terrain.

The approach taken with this ‘Landing with Inertial and Optical Navigation’ (LION) system compares the images the camera is acquiring with maps built up by previous orbital missions, plus 3D renderings of the surface topography called ‘digital elevation models’ (DEMs), which are exploited down to the very core of the system to handle relief variations.

The apparent size of each landmark is also measured in the image, in order to avoid confusing a simple boulder with a mountain top.
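Why apparent size helps can be shown with basic pinhole-camera geometry. The article does not say how LION uses the measurement, so the sketch below is only an illustration: the focal length and the 20-pixel feature are hypothetical, and the two distances are the 100 km and 3 km altitudes mentioned in the text.

```python
def apparent_size_px(size_m, distance_m, focal_px):
    """Pinhole projection: image size of an object viewed face-on."""
    return focal_px * size_m / distance_m


def physical_size_m(size_px, distance_m, focal_px):
    """Invert the projection: physical size implied by an image measurement."""
    return size_px * distance_m / focal_px


FOCAL_PX = 1000.0  # hypothetical focal length, in pixels

# The same 20-pixel feature implies very different objects at different ranges.
from_orbit = physical_size_m(20.0, 100_000.0, FOCAL_PX)   # seen from 100 km
near_ground = physical_size_m(20.0, 3_000.0, FOCAL_PX)    # seen from 3 km

print(f"20 px at 100 km: about {from_orbit:.0f} m across")
print(f"20 px at 3 km:   about {near_ground:.0f} m across")
```

A feature that spans the same number of pixels is kilometre-sized from orbit but only tens of metres across near the ground, so comparing the measured size with the size expected from the map helps rule out false matches.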

“All necessary images and 3D topography would have already been gathered by a previous mission,” Jeff adds.

“For example, NASA’s Lunar Reconnaissance Orbiter has acquired high-resolution imagery of regions of interest on the Moon, such as the poles, while using a laser altimeter to build up detailed topography maps.”

When evaluating LION’s navigation accuracy, it turned out that pure software validation based on virtual images was insufficient. Key error contributors to the final navigation performance were associated with the camera properties and the terrain map itself.

“Testing LION with real images of a known planetary surface was an essential step to demonstrate its actual performance and understand the various error contributors,” explains Thomas Voirin of ESA’s Guidance Navigation and Control section, managing this research project from the Agency side.

The testbed includes a high-resolution scale lunar model, built by the DLR German Aerospace Center in Bremen, Germany, a rigid camera support with three axes of freedom, and a Sun-like light source, all mounted on a highly stable optical table.

“With this setup we have been able to demonstrate position accuracy better than 50 m at 3 km altitude at scale on real hardware,” Thomas adds.

Designing this physical testbed was a challenging task in itself. Demonstrating pinpoint landing required the testbed to have an intrinsic positioning accuracy at least ten times better than the expected navigation performance, which works out to about 10 m at lunar scale.

“Our testbed needed to fit in a reduced available space of 2 x 1 m within ESTEC’s Control Hardware Lab, so we had to scale everything down – including the 10 m positioning accuracy requirement,” Thomas explains.

“This scales down to a positioning accuracy of less than a millimetre, which was only achievable in our laboratory with the use of optical correlation of real images of the lunar model with virtually rendered images of the same scene generated with PANGU, a specialised ESA planetary scene generation software developed by the University of Dundee.”
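The scaling arithmetic can be checked in a few lines. The article does not give the model's actual scale; the only thing it implies is that 10 m must shrink to less than a millimetre, so the scale must be finer than 1:10,000. The 1:20,000 figure below is purely an assumed value for illustration.

```python
def to_model_mm(real_m, scale_denominator):
    """Convert a real-world length in metres to millimetres on a 1:N scale model."""
    return real_m * 1000.0 / scale_denominator


# Boundary case implied by the text: at 1:10,000, 10 m becomes exactly 1 mm,
# so the testbed scale must be finer than this.
boundary_mm = to_model_mm(10.0, 10_000)

ASSUMED_SCALE = 20_000  # hypothetical scale, not stated in the article
accuracy_mm = to_model_mm(10.0, ASSUMED_SCALE)      # 10 m accuracy requirement
altitude_mm = to_model_mm(3_000.0, ASSUMED_SCALE)   # 3 km altitude

print(f"10 m at 1:10,000 -> {boundary_mm} mm")
print(f"10 m at 1:{ASSUMED_SCALE} -> {accuracy_mm} mm")
print(f"3 km at 1:{ASSUMED_SCALE} -> {altitude_mm} mm")
```

Under that assumed scale, the camera would sit about 15 cm above the model to represent a 3 km altitude, which shows why sub-millimetre positioning of the camera matters.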

Jeff was a trainee in ESA’s former Lunar Lander team, gaining an interest in navigation which inspired his PhD.

He is now working on applying his algorithm to terrestrial vehicles, such as helicopters and quadcopter mini-unmanned aerial vehicles, as well as consumer devices: “All the instruments an interplanetary lander would need are also there on the smartphone in your pocket, so there is also broad terrestrial potential for this space-inspired technology.”

The project is co-sponsored by Astrium Space Transportation of France, ONERA and ESA. It was undertaken through ESA’s Networking/Partnering Initiative, which supports work carried out by universities and research institutes on advanced technologies with potential space applications, with the aim of fostering increased interaction between ESA, European universities, research institutes and industry.