Human dependability: spacecraft controllers

Many spacecraft operation tasks are automated. Modern satellite systems perform a growing number of activities themselves and are, to a certain extent, protected from ruinous errors by underlying design provisions such as “failure detection, isolation and recovery”.

Spacecraft operators receive a very high level of training. If anything, ‘over-experience’ with a system might pose the greater threat, with overconfidence leading to drifting attention. As a result, the operations design of space systems typically includes detailed procedures and checklists which must be rigorously adhered to.

Commands are therefore sent to satellites using a two-step approach similar to ‘arm and fire’. The principle is the same as on a mobile phone, which must be unlocked before a number can be dialled, to prevent accidental calls.

The implementation in practice is more sophisticated, but in essence a confirmation is required before anything is sent to the satellite, together with additional authorisation for telecommand functions recognised as hazardous.
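The two-step principle can be illustrated with a minimal sketch. It is not how any real mission control system is built: the `CommandGate` class, its method names and the command names are all hypothetical, chosen only to show arming, confirmation and the extra authorisation for hazardous commands.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class CommandGate:
    """Hypothetical two-step gate: a command is armed first, then fired."""
    hazardous: Set[str] = field(default_factory=set)  # commands needing extra authorisation
    armed: Optional[str] = None                       # command currently armed, if any
    sent: List[str] = field(default_factory=list)     # stand-in for the uplink

    def arm(self, command: str) -> None:
        # Step 1: select the command. Nothing is transmitted yet.
        self.armed = command

    def fire(self, command: str, confirmed: bool, authorised: bool = False) -> bool:
        # Step 2: send only if this exact command was armed and confirmed,
        # and, for hazardous commands, additionally authorised.
        ok = self.armed == command and confirmed
        if ok and command in self.hazardous:
            ok = authorised
        # Assumption of this sketch: every attempt disarms the gate,
        # so a rejected command must be armed again from scratch.
        self.armed = None
        if ok:
            self.sent.append(command)
        return ok


gate = CommandGate(hazardous={"FIRE_THRUSTER"})
gate.arm("FIRE_THRUSTER")
print(gate.fire("FIRE_THRUSTER", confirmed=True))  # rejected: no authorisation given
```

Disarming after every attempt is a design choice of this sketch; it means a slip at the second step cannot leave a command waiting to be sent by a later keystroke.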

Working reliably with people in orbit

With ESA’s human spaceflight activities, the possibility of human error is increased because there are operators in orbit, as well as on the ground – and the stakes of any mistake are correspondingly high.

Astronauts therefore undergo years of training and simulations concerning all International Space Station (ISS) systems, as do ground controllers. The outcomes of these activities also represent useful raw data as to where real-life errors are more likely to take place, feeding back into subsequent error control work.

All ESA human spaceflight systems share a common stringent approach in terms of ‘failure avoidance requirements’: no single operator error or other failure shall result in damage to equipment or injury to personnel, and no combination of two operator errors or other failures shall result in the potential for catastrophic failure on-orbit.
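One way to read this rule is as a count of independent barriers standing between an error and its consequence. The sketch below rests on a simplifying assumption that is not stated in the requirement itself: barriers are fully independent and each error or failure defeats exactly one of them. The names `Consequence` and `meets_failure_tolerance` are hypothetical.

```python
from enum import Enum


class Consequence(Enum):
    # Value = number of errors or failures that must be tolerated.
    DAMAGE_OR_INJURY = 1  # no single error or failure may cause it
    CATASTROPHIC = 2      # no combination of two may cause it


def meets_failure_tolerance(consequence: Consequence, independent_barriers: int) -> bool:
    """Check a design against the rule under the one-error-per-barrier assumption.

    With N independent barriers, N errors are needed to reach the consequence,
    so the design tolerates N - 1 errors. It passes when N - 1 is at least the
    number of errors that must be tolerated.
    """
    return independent_barriers - 1 >= consequence.value


# A hazard with catastrophic potential guarded by only two barriers falls short.
print(meets_failure_tolerance(Consequence.CATASTROPHIC, 2))
```

Under this reading, equipment damage or injury needs at least two independent barriers and a catastrophic outcome needs at least three.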

Lionel Baize of the French space agency CNES briefed the workshop on how some minor operator errors were identified during the first flight of the Automated Transfer Vehicle (ATV) to the ISS in 2008 – this ESA vehicle being operated from a CNES-run control centre in Toulouse.

Crucially, because of the ATV’s failure avoidance systems, these operator errors had no practical consequences and the mission was a conspicuous success. In addition, the captured errors guided system improvements to prevent them occurring again. “Following the mission the ATV control centre was modified and the operational process has been scrutinised and adapted, in particular for routine operations,” said Mr Baize.

Putting space systems together

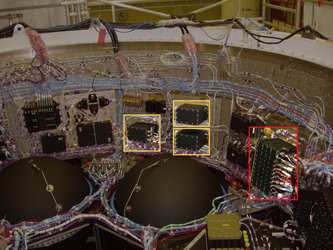

People are the ultimate builders of space systems; any error during design and manufacturing might have extremely serious consequences

People are also the ultimate builders of space systems; any error during the design and manufacturing phases might have extremely serious consequences, taking in everything from coding software to manufacturing electronic parts or structural materials.

Once a ‘non-conformance’ – the term used for an element failing to meet project requirements – has been traced to a human action, the general aim is to identify the root cause and prevent it happening again, most often through improved training or procedural changes. All human error non-conformances or other slips must, however, be properly captured and documented so that satisfactory analysis can take place.

Mario Ferrante and Olivier Remondiere of Thales Alenia Space shared their company’s human error prevention strategy for industrial processes. They seek to minimise the possibility of mistakes in the first place through clever design. Employees involved in developing manufacturing instructions or drawing up procedures are given extensive training, while a dedicated risk analysis is also performed early in the design process.

Setting standards

Human dependability is part of overall system safety and dependability: a structured approach to identifying and preventing human error.

The structured approach to Human Dependability taken by ESA’s Safety and Dependability section may eventually lead to a dedicated guidebook on human error identification and control, issued through the European Cooperation for Space Standardization (ECSS), which works to coordinate shared operational standards within our continent’s space sector.

Current ECSS standards do address system safety, dependability and hazard analysis in general, but not specifically human error – at least not yet.
